Deep Research just got more controllable – and that’s the point

AI research tools are only as useful as the sources they’re built on. If the inputs are noisy, biased, or outdated, the output looks confident but drifts away from what you actually need. That’s why the latest improvements to Deep Research in ChatGPT matter: they’re less about “more AI” and more about better governance: clearer sourcing, tighter focus, and easier accountability.

OpenAI’s update gives users two big levers: more control over which websites Deep Research uses and a larger collection of connected business apps you can draw from as trusted inputs. Together, these changes turn Deep Research from a clever summariser into something closer to a repeatable workplace workflow.

Control the sources, control the risk

The most meaningful change is the ability to focus research on specific websites. In practice, that means you can steer a report towards the places your team already relies on: government and regulator sites, standards bodies, vendor documentation, technical knowledge bases, or sector associations.

You can add multiple sources and either restrict research to only those sites or ask ChatGPT to prioritise them while still allowing broader web search. That sounds like a small feature, but it changes the quality of the conversation you can have with the output. Instead of asking “is this true?”, you can ask “does this align with the policy/standard/source we trust?”

For SMEs, this is especially valuable because the biggest barrier to using AI at work isn’t capability; it’s confidence. When you can point the research at trusted references, the review process becomes faster and the report becomes easier to defend internally.
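To make the difference between restricting research to your chosen sites and merely prioritising them concrete, here is a minimal Python sketch. It is purely illustrative: it assumes candidate sources arrive as a plain list of URLs, and the function names (`is_trusted`, `select_sources`) and example domains are hypothetical. It is not how ChatGPT implements Deep Research, and it is not an OpenAI API.

```python
from urllib.parse import urlparse

# Hypothetical example domains a team might trust; not a recommended list.
TRUSTED_DOMAINS = ["gov.uk", "ico.org.uk", "iso.org"]


def is_trusted(url: str, trusted: list[str]) -> bool:
    """True if the URL's host is a trusted domain or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in trusted)


def select_sources(urls: list[str], trusted: list[str], mode: str) -> list[str]:
    """Apply a hypothetical source policy to candidate URLs.

    mode="restrict":   keep only trusted sources.
    mode="prioritise": keep everything, but move trusted sources to the front.
    """
    if mode == "restrict":
        return [u for u in urls if is_trusted(u, trusted)]
    if mode == "prioritise":
        # sorted() is stable: trusted URLs (key False) come first,
        # and the original order is preserved within each group.
        return sorted(urls, key=lambda u: not is_trusted(u, trusted))
    raise ValueError(f"unknown mode: {mode!r}")


candidates = [
    "https://example-blog.com/gdpr-summary",
    "https://ico.org.uk/for-organisations/",
    "https://www.gov.uk/data-protection",
]
print(select_sources(candidates, TRUSTED_DOMAINS, "restrict"))
print(select_sources(candidates, TRUSTED_DOMAINS, "prioritise"))
```

Matching on the host name (including subdomains) rather than searching for a substring anywhere in the URL avoids accidentally trusting look-alike domains such as notgov.uk.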

Connect the apps that power the business

The second shift is about specificity. Deep Research can now use a larger collection of connected apps as trusted sources, which means your reports can reflect the platforms and data providers you actually use day to day. This is where research stops being generic and starts being actionable, particularly for finance, operations, procurement, and commercial teams.

Think of it as moving from “what does the internet say?” to “what do our systems and our trusted sources say?” That’s the difference between a report you skim and a report you can use to make a decision.

A better review experience isn’t cosmetic – it’s operational

OpenAI has also redesigned the Deep Research experience so it’s easier to manage in one place. A fullscreen report view, clearer navigation, and a more structured workflow help you plan research before it runs, monitor progress, and adjust direction mid-way through. The practical benefit is simple: less rerunning, less copy-paste, and fewer dead ends.

When research can take time, being able to steer it while it’s running, by adding sources or refining the focus, turns the tool into something that fits real working patterns.

Where this matters most (and why)

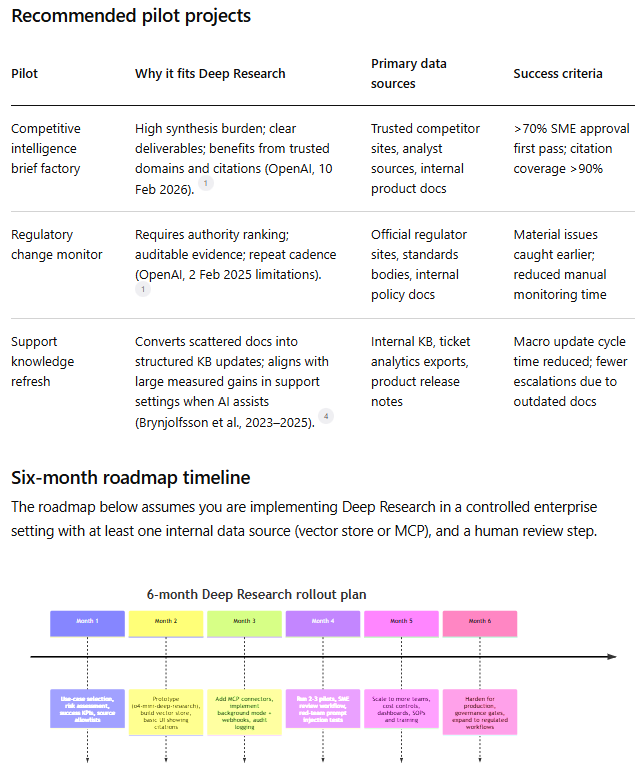

If you’re wondering whether this is “nice to have” or genuinely useful, here are two situations where source control and connected apps make an immediate difference:

1) Compliance and regulation

Prioritise government and regulator sites so the report is anchored in primary sources. That reduces the risk of citing secondary summaries or outdated interpretations.

2) Technical evaluation and procurement

Steer research towards vendor documentation, standards bodies, and credible technical references. This keeps the output focused on implementation details rather than marketing claims.

The bigger takeaway: trust is a feature

The most important trend here is that AI tools are shifting from “answer engines” to controlled systems, where you can shape inputs, audit outputs, and repeat results. For teams that care about accuracy, compliance, and speed, this is exactly the direction you want.

If you’re exploring how to use AI to automate report generation and reduce admin-heavy research, the winning approach is simple: pick the sources you trust, connect the apps that matter, and standardise the workflow.

Want to put this into a repeatable workflow?

At gecco, we help SMEs turn tools like this into reliable day-to-day processes, so research doesn’t stay in a chat window but feeds the work (briefs, docs, approvals, and next steps). If you’d like, we can help you design a lightweight “trusted sources” research setup and the handoff into your team’s workflows.
